Redesigning a website is usually about making its experience better. But change doesn’t guarantee better, just different. This is why user experience (UX) designers focus so much on user research. The more you know about user needs, as well as business and other requirements, the more uncertainty you can eliminate about the impact of a new design. However, you will never know for sure how a design choice will perform until real users encounter it in real life. This is a nerve-wracking reality. Neither UX designers nor most project stakeholders are comfortable taking the risk of just changing something and seeing how it goes. Enter A/B testing.

What is A/B Testing?

A/B testing, or split testing, uses live website traffic to help decide whether a proposed change is worth making, without taking the risk of changing your product outright. To conduct an A/B test, you keep the current version of a webpage and build a second version that includes the variable researchers want to learn about. Live traffic to the original page is then split in half, with visitors randomly assigned either to the original (the control) or to the new page (the variation). Finally, researchers compare the data gathered from both versions during the testing period to see how the proposed change affected user behavior (Whitenton, 2019).
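The random 50/50 split described above can be sketched in a few lines of Python. This is only an illustration of the idea, not how any particular testing tool works: the function name `assign_variant`, the experiment label, and the visitor IDs are all made up for the example. It assumes each visitor has some stable identifier (such as a cookie value), and it hashes that identifier so a returning visitor keeps seeing the same version.

```python
import hashlib
from collections import Counter


def assign_variant(visitor_id: str, experiment: str = "cta-button-color") -> str:
    """Place a visitor in 'control' or 'variation' with a 50/50 split."""
    # Hash the (hypothetical) experiment name together with the visitor ID.
    # The same visitor always lands in the same group, and the hash spreads
    # visitors roughly evenly, which stands in for random assignment.
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # a number from 0 to 99
    return "control" if bucket < 50 else "variation"


# Simulate 10,000 made-up visitors and count how many land in each group.
visitors = [f"visitor-{n}" for n in range(10_000)]
counts = Counter(assign_variant(v) for v in visitors)
print(counts)
```

Hashing instead of flipping a coin on every page load is a design choice: it keeps the experience consistent for repeat visitors, which matters when the behavior you measure (a purchase, a donation) may happen on a later visit.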

There are many tools available to help build and execute an A/B test, including Google Optimize (which is free and easy to set up with Google Analytics), Optimizely, VWO, and HubSpot (Chi, 2019). Some content management systems and digital experience platforms even have built-in functionality for running A/B tests, so be sure to weigh that as a possible benefit when choosing one for your site.

A/B testing as applied to content is often discussed as an option for improving marketing or sales efforts. For UX improvements, you’re usually applying it to design elements. For example, you might want to test how the color of a call-to-action button impacts donations or how the placement of an email subscription field affects submissions. Whatever you choose to focus on in an A/B test, there are two important things to remember:

- Only test one variable at a time

If you change more than one element of a design, then you can never be sure which element is responsible for the change in user behavior (PlaybookUX, 2019). For example, you may want to know if users are more willing to make a purchase when given a red button than the current green one. But if the red button is at the top of the page in the new version, the green one is at the bottom in the current version, and the red one produces more sales, was it the color or the placement that caused the improvement? Simply changing your current button, which is located at the bottom of the page, to red won’t necessarily guarantee a better outcome.

In this case, the A/B test was a waste of time in terms of producing helpful insights. If you’re interested in testing multiple variables, you should run a series of A/B tests or a multivariate test (A/B/C/D/E/etc.). Multivariate tests, though, require high website traffic or longer test periods so that splitting traffic still provides a reliable amount of data for each test segment. As a result, multivariate testing often isn’t as useful a research technique for smaller, less-trafficked sites (Moran, 2019).

- Create a hypothesis: know what you want to measure, how you’re going to measure it, and why it’s important

You can’t focus on testing for everything at the same time. Any design change you want to make should have a reason: increase conversions (sales, donations, customer data submissions), decrease bounce rate, etc. This reason should preferably tie back to user, marketing, and/or business requirements in some way. Improving user experience because it’s the right thing to do is a respectable goal, but UX designers are more often tasked with doing it to help a business’s or organization’s bottom line, so keep this in mind when justifying testing.

With your what and why determined, you now need your how. If you’re familiar with Google Analytics, then you know a webpage can produce a lot of data. For any A/B test, you must decide which data points are the most relevant. For example, in the previous scenario of the red versus green button, you might want to look at how much of the referral traffic from the page to the checkout page resulted in sales, or at the average order amount of customers who came from one version of the page compared to the other. Clarity about what specifically you want to get out of an A/B test is important for producing usable insights (Brown, 2017).
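To make the "how" concrete, here is a minimal Python sketch of comparing one data point, the conversion rate, between the control and the variation. The visitor and sales counts are invented for illustration, and the function name `compare_conversions` is hypothetical. The sketch uses a standard two-proportion z-test as one common way to judge whether a difference is likely to be more than chance; dedicated A/B testing tools may use other statistical methods.

```python
from math import erfc, sqrt


def compare_conversions(conv_a: int, n_a: int, conv_b: int, n_b: int) -> dict:
    """Compare conversion rates of control (a) and variation (b)."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate: the conversion rate if both versions performed the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("Cannot test: no variation in the data.")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the normal distribution.
    p_value = erfc(abs(z) / sqrt(2))
    return {
        "rate_control": rate_a,
        "rate_variation": rate_b,
        "relative_lift": (rate_b - rate_a) / rate_a,
        "z": z,
        "p_value": p_value,
    }


# Made-up numbers: green button (control) vs. red button (variation).
result = compare_conversions(conv_a=200, n_a=5000, conv_b=250, n_b=5000)
for name, value in result.items():
    print(f"{name}: {value:.4f}")
```

A small p-value (0.05 is a common but arbitrary cutoff) suggests the difference is unlikely to be random noise. It does not say whether the difference is large enough to be worth the cost of the design change.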

Why A/B Testing is Useful

As previously discussed, A/B testing helps lower the risk of implementing design changes by testing them with live website traffic, but there are other reasons for using this research technique (BrightEdge).

- Enables incremental improvements

Instead of waiting until your next big redesign to fix problems or improve your site, you can make user-centered design changes of all sizes throughout the lifecycle of a product with A/B testing.

- Produces data-driven decisions

If you or your stakeholders are on the fence about the return on investment (ROI) of a design change, then the data from an A/B test can be the tipping point that helps push you in one direction or the other.

- Is scalable to resources

As long as someone knows the scientific method involved in A/B testing and has access to an A/B testing tool, then running a test and analyzing the results is pretty straightforward. Multinational companies can continually run tests on every page or design element, while small businesses can conduct tests to help with problems as they arise. Coming up with the idea for the design change is usually the harder part of the process.

- Helps with prioritizing

This is especially important if your time or resources are limited. Running A/B tests on a number of possible design changes can tell you which ones are most worth investing in right now and which ones are nice to have should you have more resources later.

A/B Testing Examples

This user research technique is widely used for all types of websites in all types and sizes of businesses. Here are 3 examples in which A/B testing helped guide beneficial design choices.

Unbounce

Software company Unbounce wondered if people would prefer the more traditional ask of providing an email address in order to download a free ebook or the option to tweet about it in exchange for the download. The company was torn because both approaches could benefit them in different ways: increased reach for email marketing or increased social exposure. So, they created an A/B test with the landing page version (left) featuring the email call to action (CTA) and the new one (right) with the tweet CTA to learn which one users were drawn to more. In the end, email won with a 24% higher conversion rate compared to the trendy idea of tweeting (Gardner, 2012).

Humana

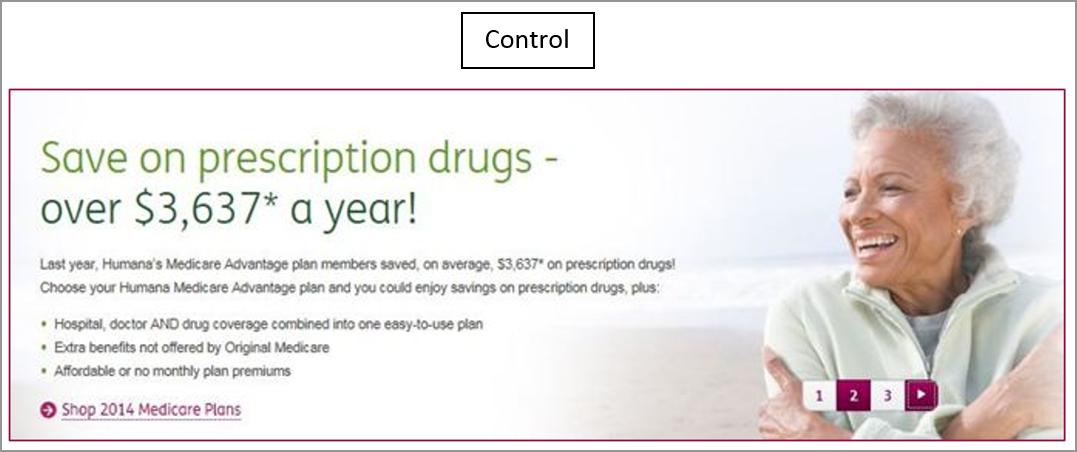

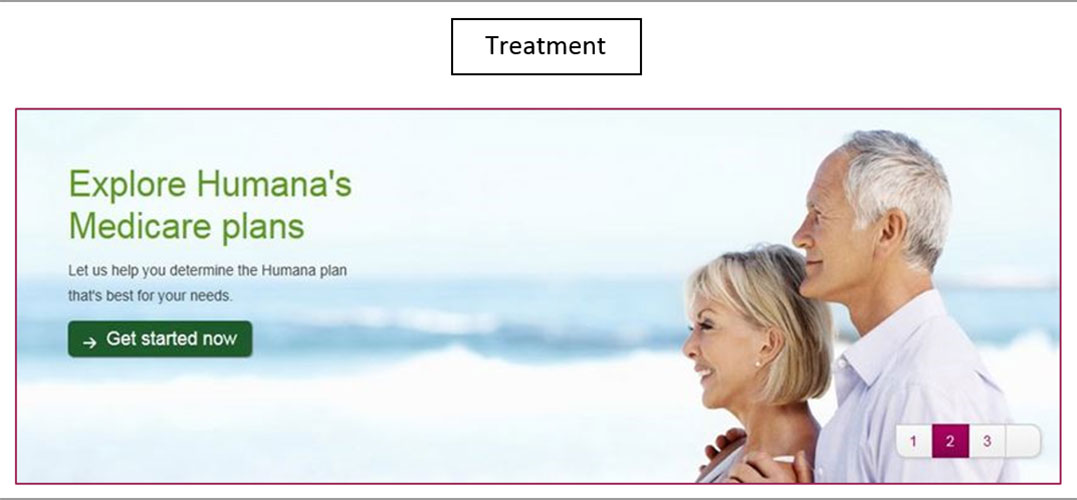

Health insurance company Humana wanted to optimize its landing page banners for click-throughs. The team hypothesized that visually cutting down the amount of copy in the banner and adding a clear CTA button would help. So, they ran an A/B test with the original version (left) and one built with their new design ideas (right). The new one ended up achieving a 433% increase in click-throughs.

This test was so successful that they ran a subsequent A/B test using the new design as the control and tweaking the CTA language from “Get Started” to “Shop” as pictured above. This one small change provided an additional 192% increase in click-throughs (Johnson, 2016).
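When one test builds on the winner of another like this, the percentage increases multiply rather than add. The short Python sketch below works through that arithmetic. It assumes both figures are relative increases in the same click-through metric and that the second was measured against the winner of the first test; the source reports the two figures separately and does not state a combined number, so treat the result as an illustration only. The function name `combined_increase` is made up for the example.

```python
def combined_increase(*percent_increases: float) -> float:
    """Combine sequential relative increases into one overall percentage."""
    factor = 1.0
    for pct in percent_increases:
        # A 433% increase means the metric is multiplied by 1 + 4.33 = 5.33.
        factor *= 1 + pct / 100
    return (factor - 1) * 100


# The two reported lifts: 433% in the first test, 192% in the follow-up.
total = combined_increase(433, 192)
print(f"Overall increase over the original banner: about {total:.0f}%")
```

Under those assumptions, the two tests together work out to roughly 15.6 times the original click-throughs (5.33 × 2.92), not the 625% you would get by simply adding the two percentages.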

Clinton Bush Haiti Foundation

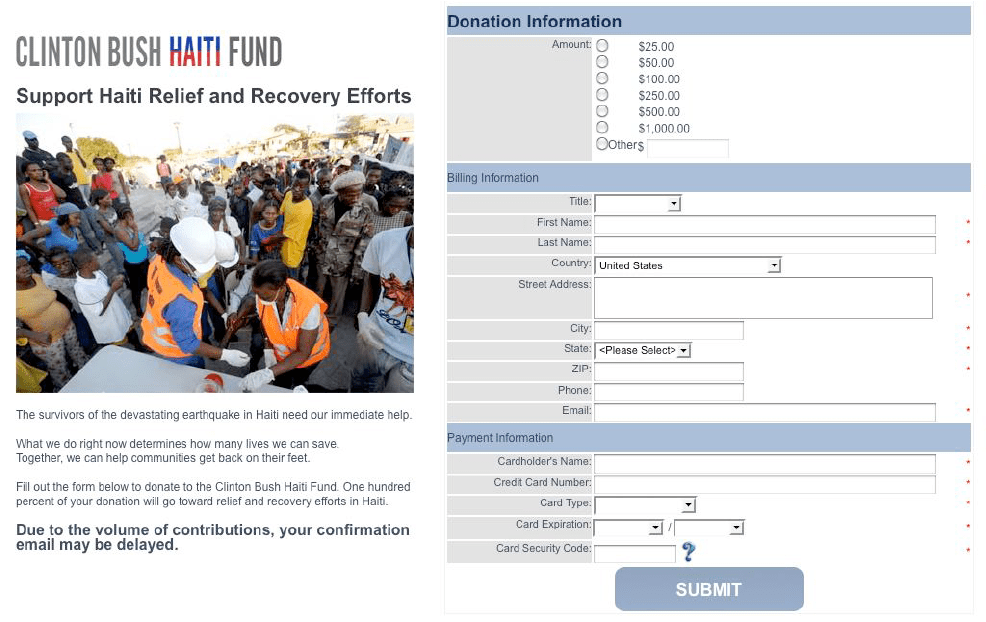

After the historically devastating earthquake in Haiti in 2010, the Clinton Bush Haiti Foundation was created within only a few days of the disaster to help. Optimizely was contacted to help design a donation page that would increase conversions. The original page was just a basic form for donors to fill out. Optimizely thought adding a photo would humanize it and appeal more to people emotionally. They ran an A/B test of the original page (left) and the same design with a photo added to the top (right).

However, the test surprisingly revealed that the proposed change decreased the average donation per page view. The Optimizely team wondered if this was because the form was so long that the addition of the photo pushed the CTA button down the page and out of view. In this era before wide smartphone adoption, users were less primed for scrolling. So, the team conducted another test with the current version and a side-by-side layout, pictured above, that featured a donation CTA at the top of the page and a shorter form. This new design, with fewer hurdles to donating and an emotional appeal, produced an 8% increase in donations per page view (Heinz, 2013).

A/B Testing Isn’t Magic

These examples show some pretty big successes with A/B testing. However, the results of A/B tests won’t always be as clear as these. Sometimes, even if one choice is shown to make more of a difference than another, you’ll still need to decide if it’s enough of a difference to justify making a design change. And even if you are willing to invest resources in making every tiny, incremental change, you might not want to, according to Nielsen Norman Group.

They warn that overusing A/B testing in an attempt to perfect every design detail can backfire. Rather than adding up to a super usable product, a bunch of incremental design changes that each tested well can start to cancel each other out or interact in negative ways, creating a worse website experience than the one you started with (Laubheimer, 2020). Plus, no matter how successful a test is, it won’t tell you why users prefer something. You’ll still need to conduct qualitative research to discover that.

All of this is to say that A/B testing, for all its buzzword popularity, is still just another user research technique and it has limitations. Use it at the right time for the right types of insight and it will serve you well.

References

BrightEdge. What is A/B testing? Retrieved from https://www.brightedge.com/glossary/benefits-recommendations-ab-testing

Brown, J.L. (2017, February 21). 5 steps to quick-start A/B testing. UX Booth. Retrieved from https://www.uxbooth.com/articles/5-steps-to-quick-start-ab-testing/

Chi, C. (2019, December 16). 8 of the best A/B testing tools for 2020. HubSpot. Retrieved from https://blog.hubspot.com/marketing/a-b-testing-tools

Gardner, O. (2012, June 27). Pay with a tweet vs. pay with an email. Unbounce. Retrieved from https://unbounce.com/a-b-testing/pay-with-a-tweet-or-email-case-study/

Heinz, B. (2013, November 23). How A/B testing empowers relief efforts in the aftermath of Typhoon Haiyan. Optimizely. Retrieved from https://blog.optimizely.com/2013/11/21/ab-testing-technology-global-relief-efforts-typhoon-haiyan/

Johnson, A. (2016, March 23). Homepage optimization: Tips to ensure site banners maximize clickthrough and conversion. MECLABS. Retrieved from https://marketingexperiments.com/a-b-testing/how-humana-optimized-banners

Laubheimer, P. (2020, July 3). Don’t A/B test yourself off a cliff. Nielsen Norman Group. Retrieved from https://www.nngroup.com/videos/dont-ab-test-yourself-cliff/

Moran, K. (2019, May 10). A/B testing vs. multivariate testing. Nielsen Norman Group. Retrieved from https://www.nngroup.com/videos/a-b-testing-vs-multivariate/

PlaybookUX. (2019, April 9). What is A/B testing in design & user experience research? [Video]. YouTube. https://youtu.be/R1jAoOvWRN0

Whitenton, K. (2019, August 30). A/B testing 101. Nielsen Norman Group. Retrieved from https://www.nngroup.com/videos/ab-testing-101/